I Tested Local LLMs to Triage My Gmail. Here's What Worked.

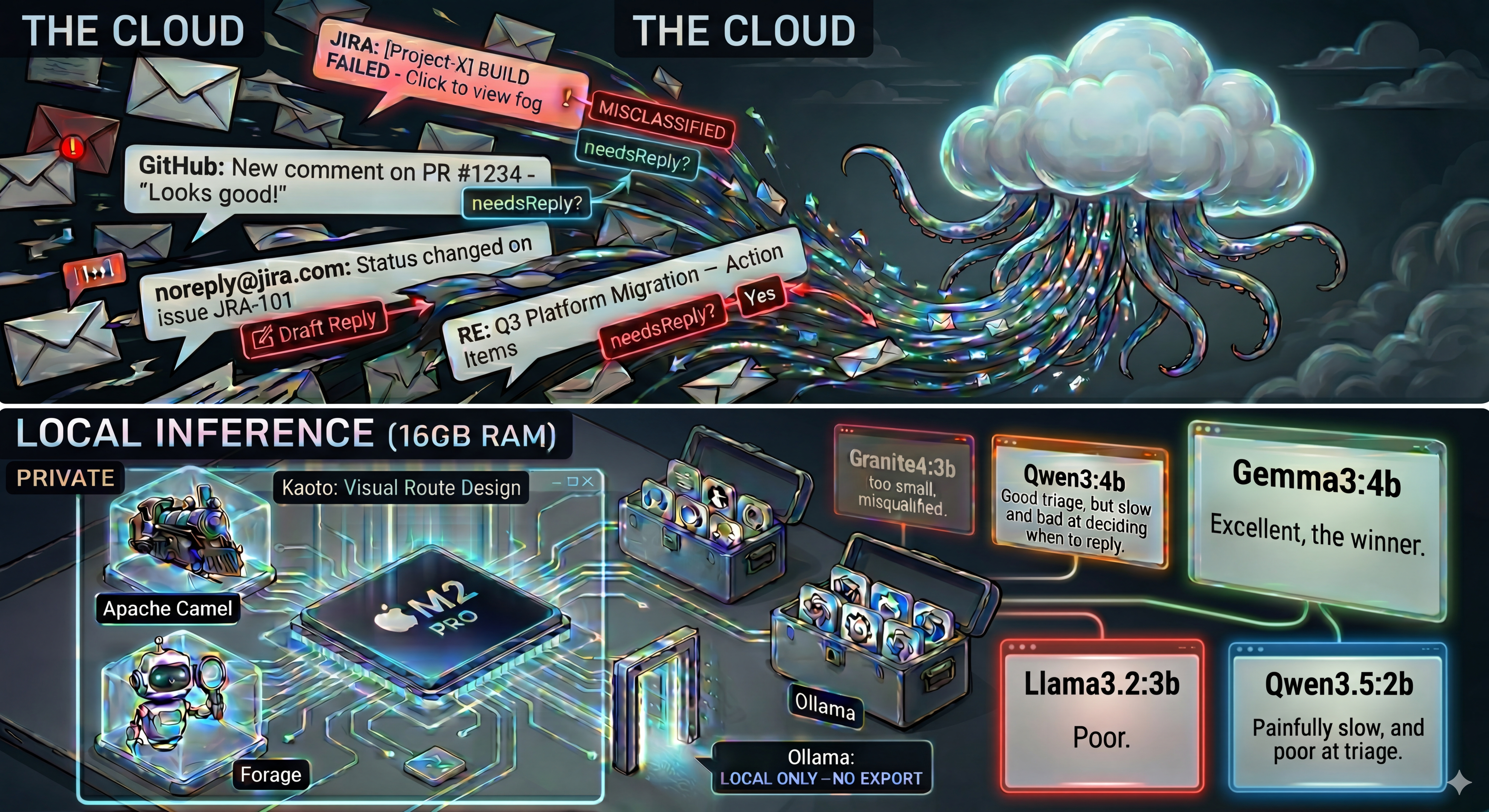

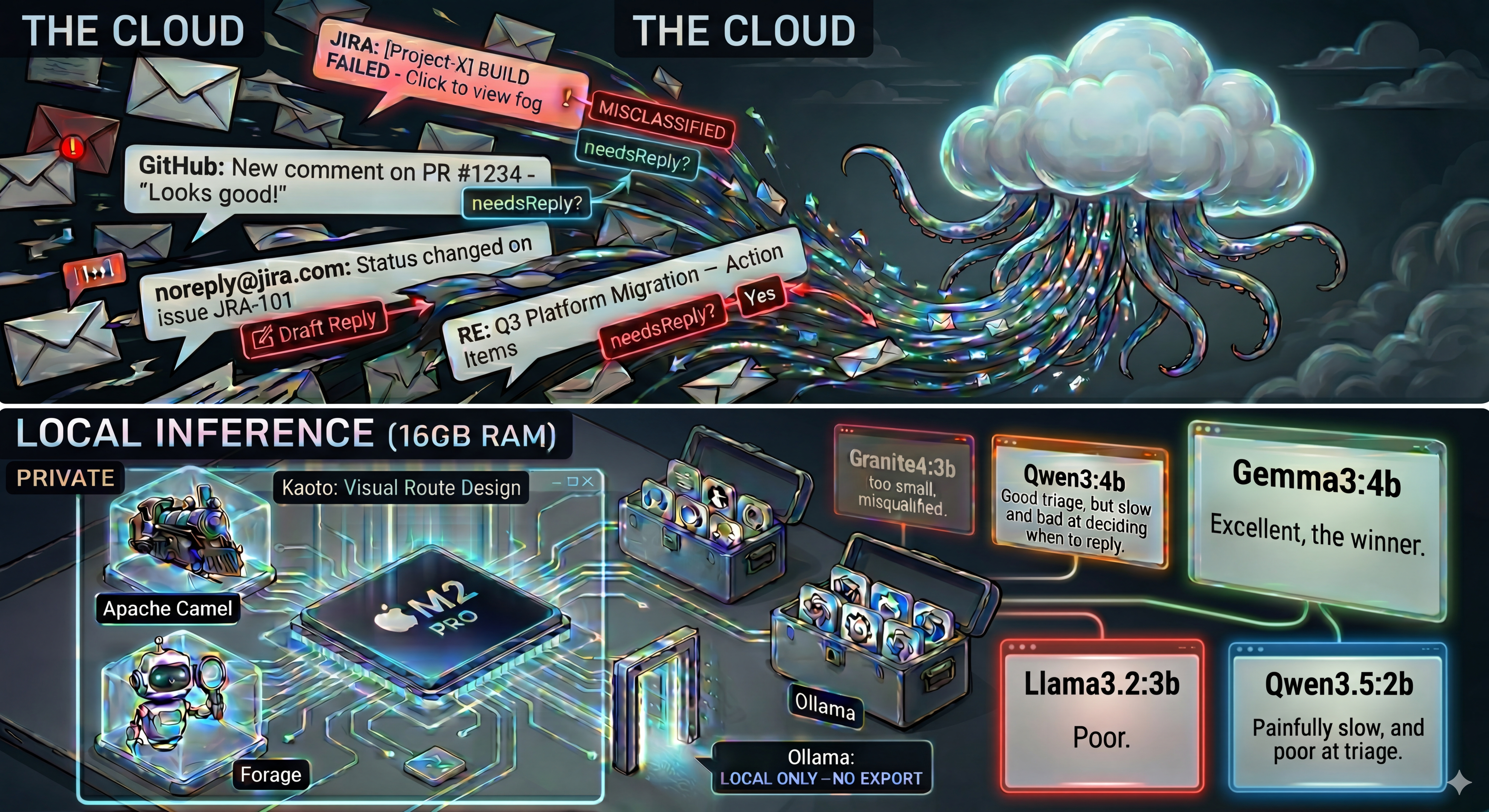

I built an AI agent that triages my Gmail inbox, classifies emails and drafts replies. All the AI runs locally on my Apple M2 Pro with 16 GB of RAM. The Camel Google Mail component handles reading and organizing emails, but all the smart stuff (classifying, deciding what to reply to, drafting replies) runs entirely on my machine. No email content is ever sent to a cloud AI service.

Here's how I picked the right model.

Image generated by Gemini

Image generated by Gemini

The Goal

I wanted an agent that could:

- 📬 Read my unread Gmail emails

- 🏷️ Classify each one into categories: URGENT, ACTION_REQUIRED, INFORMATIONAL, SUSPICIOUS, PURCHASE, or SHIPPING

- 📂 Move it to the corresponding Gmail label automatically

- ✍️ Decide if the email needs a reply, and if so, draft one

The stack:

- Apache Camel for integration (Gmail and Ollama connectors)

- Forage to configure the LangChain4j AI beans through properties files

- Ollama for local LLM inference

- Kaoto to design the routes visually

I'll share the full source code in a follow-up post.

The Constraint: 16 GB of RAM

My MacBook has an M2 Pro chip with 16 GB of unified memory. Sounds like a lot, right? But once macOS, Ollama, and the JVM are running, there's not much headroom left for a large model. I needed something that fits in memory, runs fast, and is smart enough to make good decisions about my emails.

My Strategy

I picked Granite and Qwen as my starting point because they're the local models most used by my colleagues and friends.

The Model Journey

Attempt 1: granite4:3b

I started with IBM's Granite 4 at 3B parameters. It's the largest Granite 4 model available on Ollama. As an IBM employee, Granite was a natural first choice. It ran fast.

But the 3B model simply didn't have enough capacity for this task. Classification was unreliable. It would misclassify emails frequently. Worse: it would draft replies to noreply@ addresses, JIRA notifications, and GitHub alerts. Imagine sending "Thanks for letting me know!" to a CI/CD build notification.

My conclusion at this point: the 3B model isn't big enough for this task. I moved on to Qwen.

Attempt 2: qwen3.5 (9b, 7b, 4b, 2b)

Following my strategy of starting with the latest, I went with Qwen3.5. I started big with the 9B model, then tried 7B, 4B, and 2B. They all ran on my machine. But the response time was several minutes per email. For comparison, Granite 4 had answered in barely a few seconds. This was unusable for an email triage agent.

Frustrated, because my friends use Qwen models regularly and swear by them, I decided to really try to make the smallest one work. I launched the 2B model with thinking mode disabled: ollama run qwen3.5:2b --think=false. That made it functional, but still painfully slow. And the triage quality was poor. It confused ACTION_REQUIRED with INFORMATIONAL. An email with the subject "Q3 Platform Migration - Action Items" got tagged as INFORMATIONAL. That's the one category you absolutely cannot get wrong.

Attempt 3: qwen3:4b

I had heard genuinely good things about Qwen3 from several people. So I went back one generation and tried it at 4B.

Triage quality was a clear improvement. It could distinguish between URGENT and ACTION_REQUIRED reliably. It stopped replying to noreply@ senders. But the Qwen models were still noticeably slower than Granite.

It would set needsReply=true for ACTION_REQUIRED emails where the action was to do something but nobody was asking for an email reply back. For example, an email saying "@All: Please review this PR by tomorrow". The action is on GitHub, not in a reply to the sender.

Qwen3 also has a "thinking" mode. It reasons through the problem in <think>...</think> tags before answering. For a simple classification task, this doubled the inference time without improving accuracy. The model itself would have been fast enough at 4B, but the thinking tokens made it noticeably slower.

Attempt 4: gemma3:4b

At this point, Gemma 3 was not part of my original plan. I searched online for models that worked well on constrained hardware, and gemma3:4b kept coming up. I decided to give it a try.

Everything clicked. 🎉 I was relieved.

It was fast. As fast as Granite. No thinking overhead. Gemma3 gives direct answers. A big contrast with the Qwen family.

It correctly tagged the Q3 Platform Migration email as ACTION_REQUIRED. Unlike Qwen3.5:2b which had tagged it as INFORMATIONAL.

And the hardest decision, needsReply, it got right too. This is what I had been struggling with across all the other models. The same PR review email has action items ("@All: Please review this PR") but nobody is asking for an email reply. Gemma3 correctly set needsReply=false. Meanwhile, direct personal emails like "Hey Zineb, are you free for lunch Wednesday?" or "Devoxx talk - need your confirmation" correctly triggered needsReply=true and a draft reply was generated.

I also tested gemma3:1b and gemma3:12b to confirm that 4B was the sweet spot.

The 1B model was fast, just like the 4B. But it hallucinated on replies, the same problem I had seen with granite4:3b. It would draft replies to emails that clearly didn't need one. At 1B, the model simply doesn't have enough capacity to make nuanced decisions about needsReply.

The 12B model never even got to run. Ollama downloaded the 8.1 GB model file, but the runner process crashed immediately with a 500 error. On a 16 GB machine with macOS and the JVM already in memory, there just isn't enough headroom for a model that size.

So 4B is confirmed as the sweet spot: large enough to reason well, small enough to actually run.

Attempt 5: phi4-mini (3.8b)

After Gemma3, I wanted to see if Microsoft's Phi-4 Mini could compete. At 3.8B parameters, it's close to Gemma3's 4B. Phi-4 Mini also has a reasoning variant, but after my experience with Qwen's thinking mode, I already knew that reasoning overhead wasn't suited for this use case.

The standard phi4-mini ran well. But both triage and reply decisions were unreliable. It classified a simple "Lunch on Wednesday?" email from a colleague as SUSPICIOUS instead of INFORMATIONAL. And it set needsReply=true for the Q3 Platform Migration email, the same mistake Qwen3 made. The action items in that email are tasks to do, not a conversation to reply to.

Gemma3:4b remains the clear winner.

Attempt 6: llama3.2:3b

Meta's Llama 3.2 at 3B is the largest small Llama model available on Ollama. Llama 3.3 and Llama 4 only come in 70B+, so this was the only option.

This was the worst experience of all. It hallucinated replies to everything, including test emails with just "test" as the subject. It also triaged those test emails as ACTION_REQUIRED. At 3B parameters, the model seems to default to "this looks important, reply to it" for almost any input. It couldn't distinguish meaningful content from noise.

The Final Comparison

| Model | Triage | needsReply | Speed | Verdict |

|---|---|---|---|---|

| granite4:3b | Poor | Poor (replied to bots) | Fast | Too small to reason well |

| qwen3.5:2b-9b | Poor (2b) | Not tested | Very slow (minutes) | Too slow on 16 GB |

| qwen3:4b | Good | Mediocre (replied when no response expected) | Slow | Good triage, too slow |

| gemma3:1b | Untested | Poor (hallucinated replies) | Fast | Too small, same reply issues as granite4:3b |

| gemma3:4b | Excellent | Excellent | Fast | The winner |

| gemma3:12b | N/A | N/A | Crashed | Too large for 16 GB, runner process killed |

| phi4-mini:3.8b | Poor (misclassified safe emails) | Poor (replied when no response expected) | Fast | Both triage and replies unreliable |

| llama3.2:3b | Poor (test emails tagged ACTION_REQUIRED) | Very poor (replied to everything) | Fast | Worst overall, hallucinated on nearly all decisions |

Conclusion

A few takeaways from this experiment 👇

You need at least 4B parameters for this kind of task. Email triage requires nuanced reasoning. Distinguishing "this email has action items" from "this email expects a reply from me" is too subtle for smaller models. They're fast, but they get it wrong too often. I didn't try going above 4B due to the constraints of my current machine, so 4B is my practical sweet spot for now.

Thinking mode is not adapted for constrained machines. On a 16 GB laptop, the overhead of thinking tokens makes models painfully slow without improving classification accuracy. For simple tasks like email triage, direct-answer models are the way to go.

Try models you haven't heard of. My best Qwen result came from going back one generation to Qwen3. And my winner, Gemma3, wasn't even on my radar until I searched online.

In my case, the prompt I had optimized and Gemma3's default configuration just clicked together for this use case. A different prompt or a different task might favor a completely different model.

What's Next

In my next blog post, I'll share the full source code and walk through the Camel routes, the prompt engineering, and how to set it up yourself.

Tried other models on constrained hardware? I'm curious what worked for you.